On my quest to learn more about Azure’s native Artificial Intelligence capabilities, I am working through each component in turn to see how it can be used.

Azure Content Moderator is a set of machine-assisted content moderation APIs, together with a human review tool, that you can use with images, videos and text so that your program can take action against potentially offensive, risky or undesirable content.

You can read more about Content Moderator here: https://docs.microsoft.com/en-us/azure/cognitive-services/content-moderator/overview

For the purposes of this brief blog article, I am going to show how to set up a Content Moderator resource and then use its subscription key with the API Testing Console.

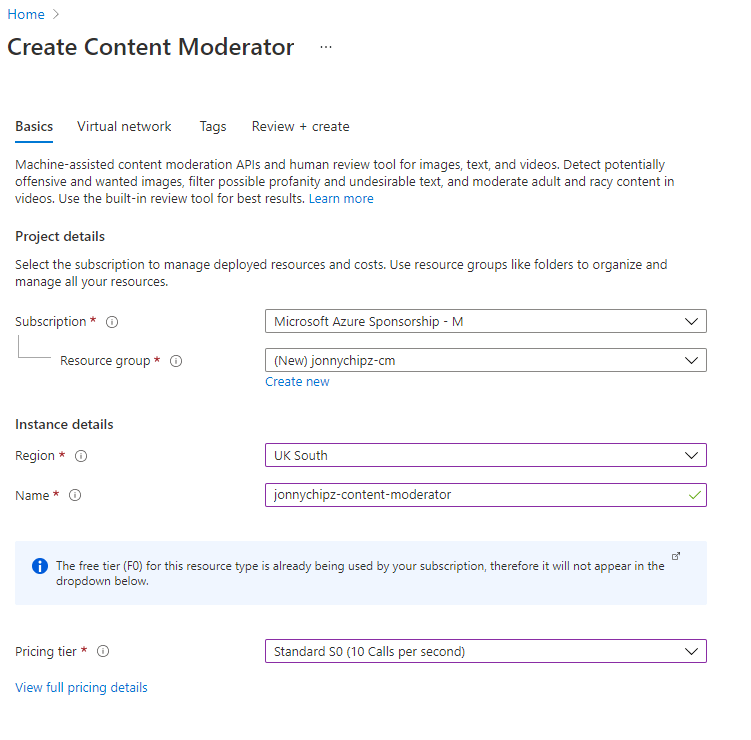

Create Content Moderator in your Azure Subscription

First off, we are going to create a Content Moderator with the free SKU from within the Azure Portal:

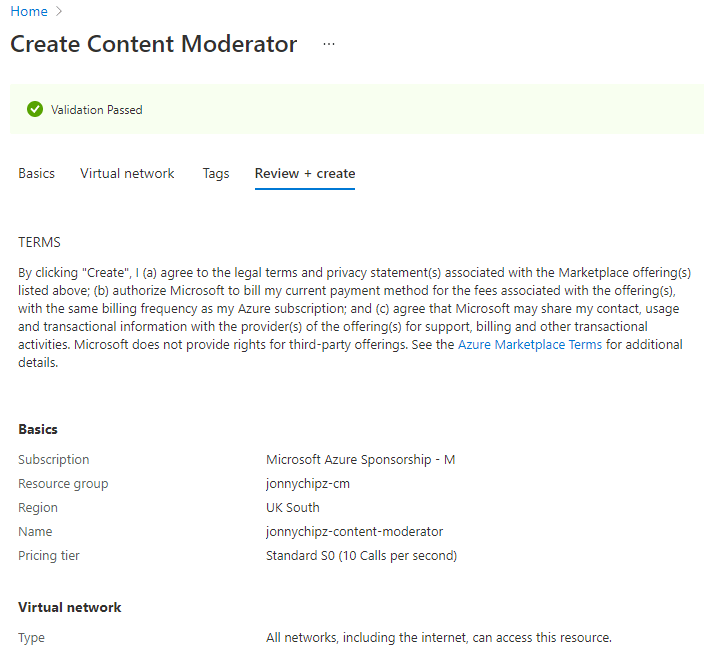

We have the option to limit network access to the Content Moderator to no networks, selected networks or all networks. For the purposes of this article I will leave the default of all networks.

You can add tags to your resource if you need to, or go straight to ‘Review and Create’:

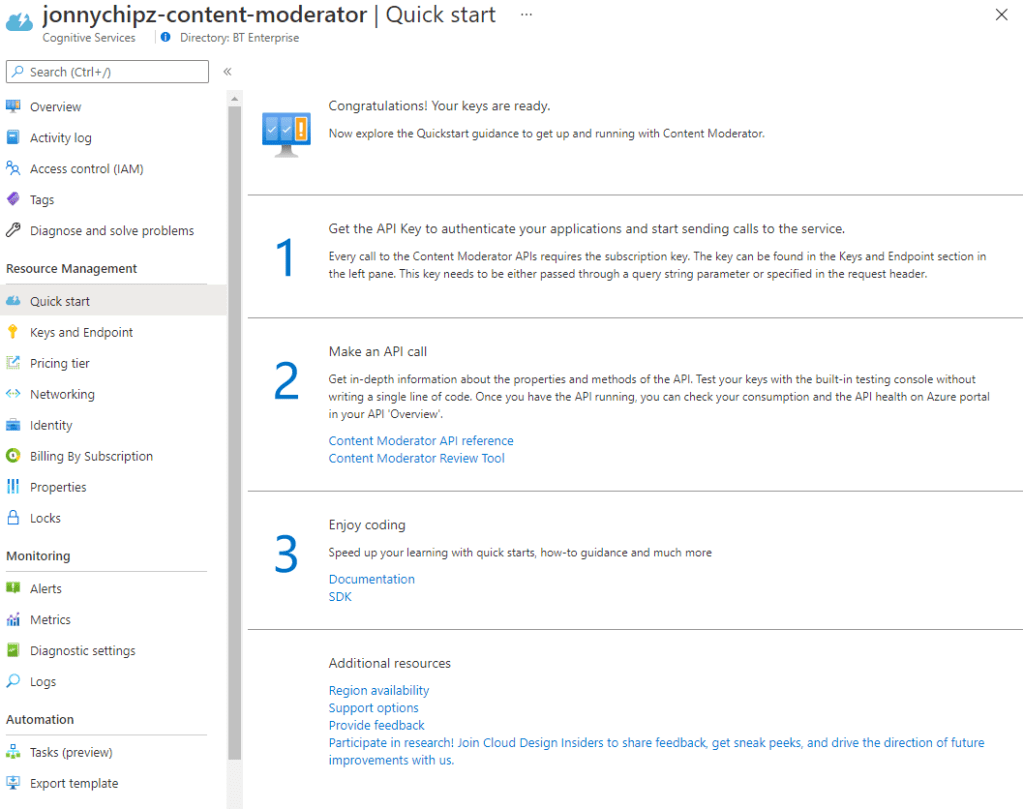

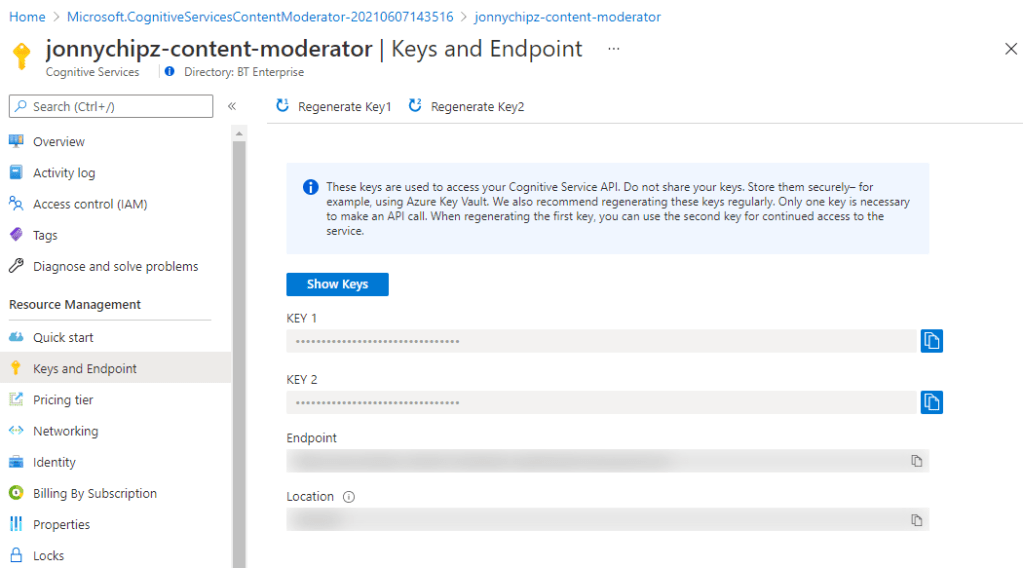

Once the Content Moderator has been deployed you will be presented with this screen:

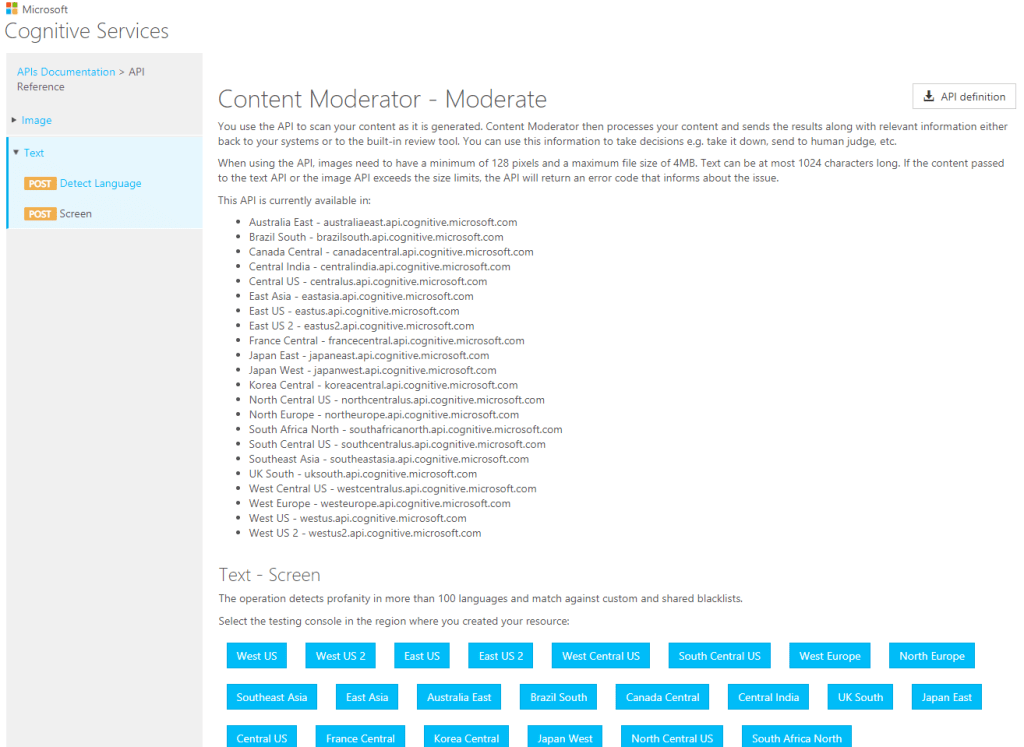

Content Moderator API Reference Page

Browse to the following page: https://westus.dev.cognitive.microsoft.com/docs/services/57cf753a3f9b070c105bd2c1/operations/57cf753a3f9b070868a1f66f

As I set up my Content Moderator in UK South, I will select that region:

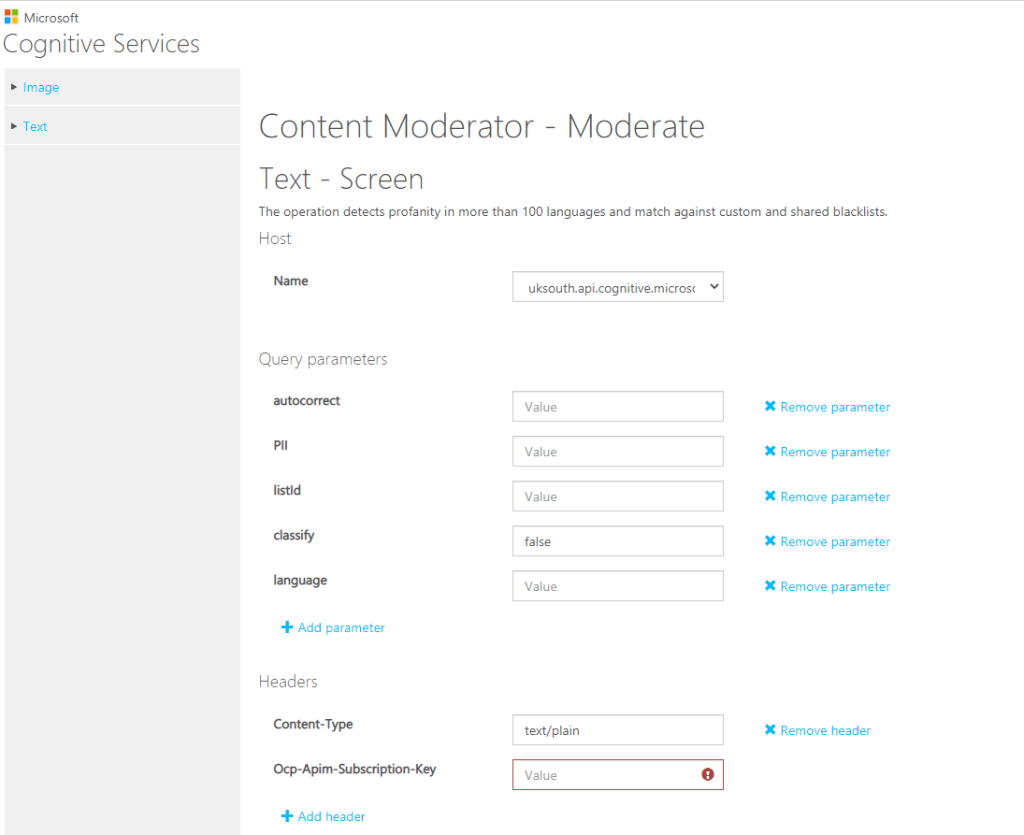

Next we will be presented with this page, where we can enter the API key (subscription key) from the Content Moderator resource we deployed:

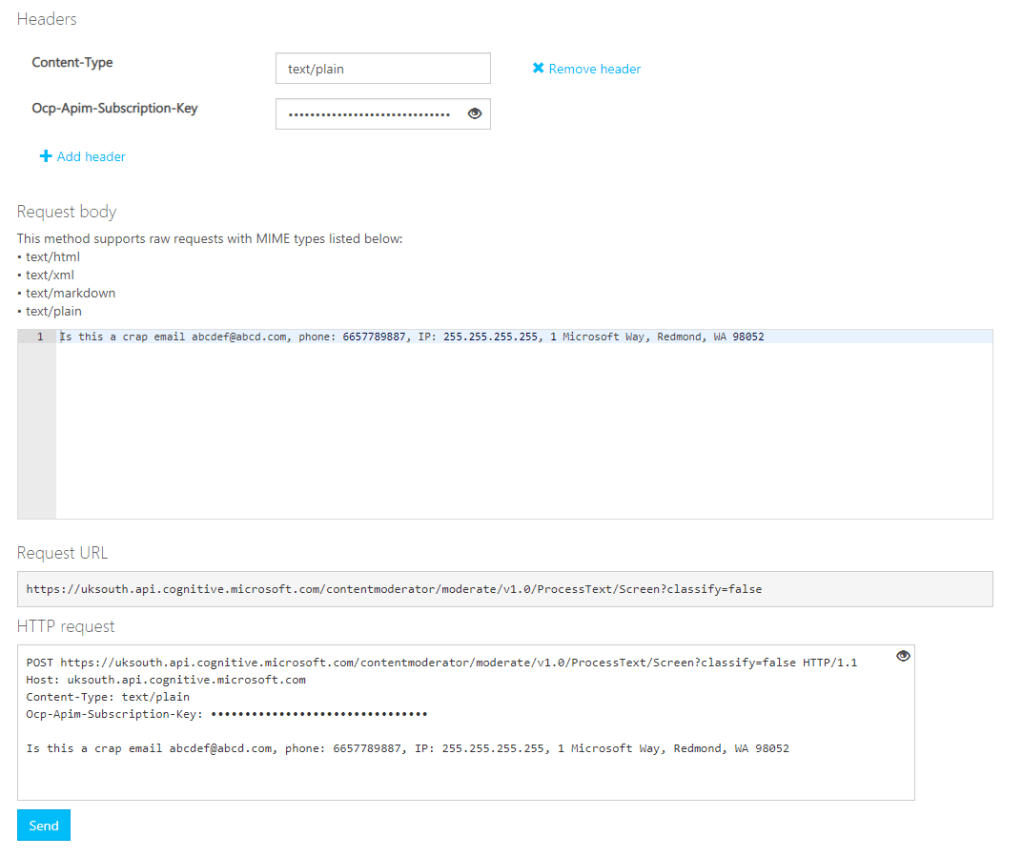

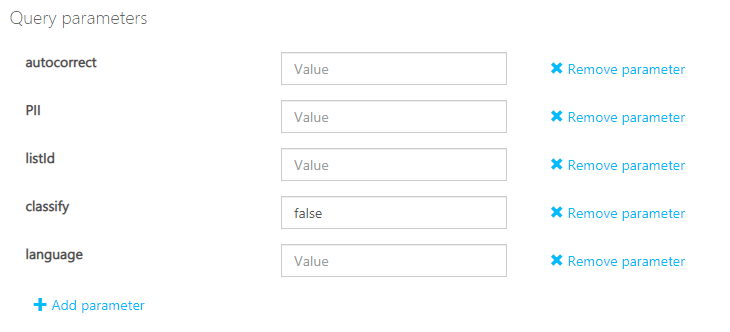

Next, if we scroll down a little, we will see that some sample text has been placed in the window to test an API call with:

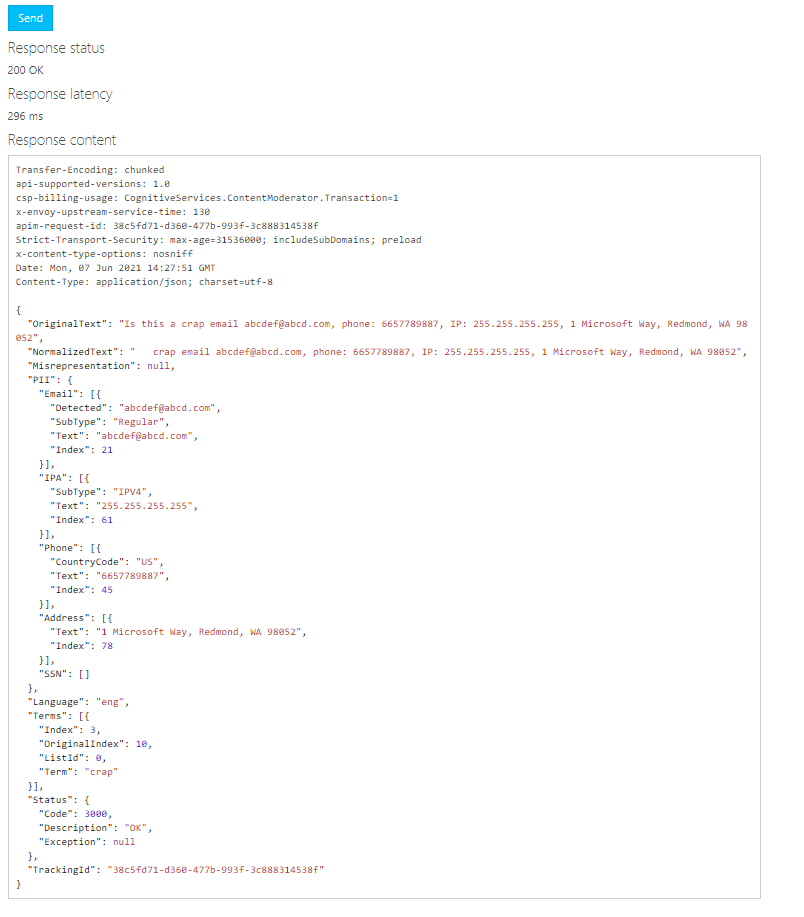

We will then be presented with the output as follows:

Here we can see that Email, IP, Phone and Address information has been detected in the output as PII, meaning it is personally identifiable information.

We can also see that the language of the text has been detected.

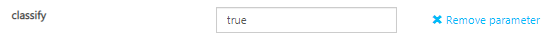
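If you want to pick the PII details out of that output in code, the following is a minimal Python sketch. The sample response is hypothetical and trimmed (the values are made up for illustration), and the field names (`PII`, `Email`, `IPA`, `Phone`, `Address`, `Text`, `Language`) are assumptions based on the shape of the console output, so check them against your own response. `summarise_pii` is a helper name invented for this example.

```python
import json

# Hypothetical, trimmed response body; the values are made up for illustration.
sample_response = json.loads("""
{
  "Language": "eng",
  "PII": {
    "Email": [{"Text": "abcdef@abcd.com", "Index": 32}],
    "IPA": [{"SubType": "IPV4", "Text": "255.255.255.255", "Index": 72}],
    "Phone": [{"CountryCode": "US", "Text": "4255550111", "Index": 56}],
    "Address": [{"Text": "1 Microsoft Way, Redmond, WA 98052", "Index": 89}]
  }
}
""")


def summarise_pii(response: dict) -> dict:
    """Map each detected PII type to the list of text fragments found for it."""
    pii = response.get("PII") or {}
    return {kind: [hit.get("Text", "") for hit in hits] for kind, hits in pii.items()}


summary = summarise_pii(sample_response)
for kind, texts in summary.items():
    print(f"{kind}: {texts}")
print("Language:", sample_response.get("Language"))
```

A response with no `PII` section simply produces an empty summary, so the helper is safe to call on text that contains nothing personally identifiable.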

Now if we change one attribute, such as setting classify to true:

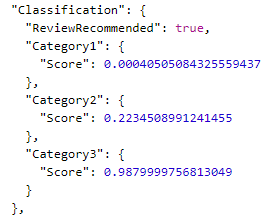

We can now see some classification predictions based on three categories:

Category 1: Refers to potential presence of language that may be considered sexually explicit or adult

Category 2: Refers to potential presence of language that may be sexually suggestive

Category 3: Refers to potentially offensive language

Each category is allocated a score between 0 and 1; the closer the score is to 1, the higher the probability that the language may require review.

As you can see in our example, Category 3 scored quite high and thus the ‘ReviewRecommended’ flag has been set to true.
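To act on the classification in your own program, something like the Python sketch below could work. The sample scores are hypothetical values chosen to mirror the situation described above (Category 3 high, review recommended), and the field names (`Classification`, `Category1` to `Category3`, `Score`, `ReviewRecommended`) are assumptions based on the console output. `category_scores` and `needs_review` are helper names invented for this example.

```python
import json

# Hypothetical classification section; the scores are made up for illustration.
sample_response = json.loads("""
{
  "Classification": {
    "ReviewRecommended": true,
    "Category1": {"Score": 0.02},
    "Category2": {"Score": 0.15},
    "Category3": {"Score": 0.98}
  }
}
""")


def category_scores(response: dict) -> dict:
    """Return {category name: score} for every entry that carries a Score."""
    classification = response.get("Classification") or {}
    return {
        name: value["Score"]
        for name, value in classification.items()
        if isinstance(value, dict) and "Score" in value
    }


def needs_review(response: dict) -> bool:
    """True when the service has set the ReviewRecommended flag."""
    classification = response.get("Classification") or {}
    return bool(classification.get("ReviewRecommended", False))


scores = category_scores(sample_response)
highest = max(scores, key=scores.get)
print("Scores:", scores)
print("Highest scoring category:", highest)
print("Needs review:", needs_review(sample_response))
```

Relying on the flag rather than hard-coding your own threshold keeps the decision with the service, although you could compare the individual scores against a stricter cut-off if your application needs one.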

Content Moderator Review Tool

Microsoft has created a basic application to show how such a content moderation tool might be used here: https://aka.ms/contentmoderator-website. Alternatively, you can use these APIs directly within your own application. Either way, I do hope you have found this article informative.

For more information on the concepts of Text Moderation please take a look at this article: https://docs.microsoft.com/en-us/azure/cognitive-services/content-moderator/text-moderation-api
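As a rough idea of what calling the text screening API from your own code might look like, here is a Python sketch using only the standard library. It assumes the regional endpoint format `https://uksouth.api.cognitive.microsoft.com`, the `ProcessText/Screen` path, the `classify`, `PII` and `language` query parameters, and the `Ocp-Apim-Subscription-Key` header as they appear in the testing console; copy the real endpoint and key from your own resource. `build_screen_request` and `screen_text` are names invented for this example, and the key below is a placeholder.

```python
import json
import urllib.parse
import urllib.request

# Assumed path of the text screening operation, as shown in the testing console.
SCREEN_PATH = "/contentmoderator/moderate/v1.0/ProcessText/Screen"


def build_screen_request(endpoint, subscription_key, text,
                         classify=True, pii=True, language="eng"):
    """Build (but do not send) a POST request that screens plain text."""
    query = urllib.parse.urlencode({
        "classify": "true" if classify else "false",
        "PII": "true" if pii else "false",
        "language": language,
    })
    url = f"{endpoint.rstrip('/')}{SCREEN_PATH}?{query}"
    headers = {
        "Content-Type": "text/plain",
        "Ocp-Apim-Subscription-Key": subscription_key,
    }
    return urllib.request.Request(
        url, data=text.encode("utf-8"), headers=headers, method="POST"
    )


def screen_text(request):
    """Send the request and return the parsed JSON response."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


# Placeholder values: replace with your own endpoint and key before sending.
request = build_screen_request(
    "https://uksouth.api.cognitive.microsoft.com",
    "<your-subscription-key>",
    "Some text to moderate.",
)
print(request.get_method(), request.full_url)
# result = screen_text(request)  # uncomment once a real key is in place
```

Building the request separately from sending it makes it easy to inspect the URL and headers before any call is made against your free-tier quota.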